Feature Extraction and Image Descriptors

Understanding key techniques for image feature representation, from fundamental concepts to advanced methods

What is Feature Extraction?

Feature extraction is the process of detecting, describing, and representing salient characteristics of an image in a numerical or symbolic form. The goal is to reduce the dimensionality of the original image data (often consisting of millions of raw pixels) into a more compact and discriminative representation. These features may capture color, shape, texture, or other domain-specific attributes that are relevant for tasks like classification, object recognition, registration, and image retrieval.

In essence, feature extraction translates raw pixel intensities into descriptors or signatures that can be more easily interpreted or compared by machine learning algorithms, thereby facilitating tasks such as image matching, pattern analysis, and decision-making.

Importance of Feature Extraction

Feature extraction plays a critical role in computer vision and image processing because it:

- Reduces Data Complexity: By focusing on the most relevant aspects (e.g., edges, contours, texture statistics), it discards redundant pixel-level information, leading to faster and more memory-efficient processing.

- Improves Performance: High-quality features can significantly boost the accuracy and robustness of machine learning and deep learning models. The choice of features often decides between success and failure in tasks like object detection or medical diagnosis.

- Facilitates Comparisons: Consistent descriptors (e.g., local keypoint descriptors) make it possible to match the same object or pattern across different viewpoints, scales, or lighting conditions, supporting tasks like image alignment, image stitching, and recognition.

Types of Features

Although features can vary in their mathematical formulation, they are often conceptually grouped as:

- Global Features: Describe the entire image holistically. Examples include color histograms, global texture descriptors (e.g., overall frequency content), and moments capturing statistical information about pixel intensities.

- Local Features: Characterize small regions or keypoints within the image. They capture fine-grained details and are more robust to occlusion, illumination changes, and other variations. Typical examples include SIFT and ORB keypoint descriptors and corner points (e.g., from the Harris corner detector).

Common Feature Extraction Techniques

A wide array of feature extraction methods exist, each suited for different applications and image conditions. Below are some of the most commonly used techniques, along with brief code snippets in Python and MATLAB.

1. Edge Detection

Edge detection highlights the boundaries of objects by capturing sharp changes or discontinuities in intensity. It is often an important first step in further shape or contour analysis.

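- Sobel Operator: Convolves the image with two 3×3 kernels that approximate the first derivative in the x and y directions; the gradient magnitude and direction are then computed from these two responses. It is simple and computationally inexpensive, which makes it useful for quick edge detection.

- Canny Edge Detector: A multi-stage algorithm comprising noise reduction (Gaussian smoothing), gradient calculation, non-maximum suppression, and hysteresis thresholding. It is known for detecting true edges robustly while minimizing false positives. In the code that follows, the two numeric arguments are the low and high hysteresis thresholds (absolute gradient values in OpenCV, normalized values in [0, 1] in MATLAB).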

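```python
import cv2

# Load image in grayscale
image = cv2.imread('image.jpg', cv2.IMREAD_GRAYSCALE)
if image is None:
    raise FileNotFoundError("image.jpg could not be read")

# Apply Canny edge detection (low threshold 50, high threshold 150)
edges = cv2.Canny(image, 50, 150)
cv2.imwrite('edges.jpg', edges)
```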

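```matlab
% Edge detection
I = imread('image.jpg');
if size(I,3) == 3
    Igray = rgb2gray(I);   % edge() needs a 2-D intensity image
else
    Igray = I;
end
edges = edge(Igray, 'Canny', [0.1 0.3]);   % [low high] normalized thresholds
imwrite(edges, 'edges.jpg');
```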
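The snippets above only run Canny. To make the Sobel description concrete, here is a minimal NumPy sketch of what the operator computes, assuming a 2-D grayscale array and edge-replicated borders (an assumption: OpenCV's `cv2.Sobel` uses a different default border mode, so border values may differ). The helper names `filter3x3` and `sobel_gradients` are hypothetical.

```python
import numpy as np

# 3x3 Sobel kernels: SOBEL_X responds to horizontal intensity change (vertical edges),
# SOBEL_Y to vertical intensity change (horizontal edges).
SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)
SOBEL_Y = SOBEL_X.T

def filter3x3(image, kernel):
    """Correlate a 2-D image with a 3x3 kernel.

    Assumption: border pixels are replicated (np.pad mode="edge").
    """
    padded = np.pad(image.astype(float), 1, mode="edge")
    h, w = image.shape
    out = np.zeros((h, w), dtype=float)
    for dy in range(3):
        for dx in range(3):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out

def sobel_gradients(image):
    """Return gradient magnitude and direction (radians) of a grayscale image."""
    gx = filter3x3(image, SOBEL_X)  # derivative along x (right minus left)
    gy = filter3x3(image, SOBEL_Y)  # derivative along y (below minus above)
    magnitude = np.hypot(gx, gy)
    direction = np.arctan2(gy, gx)
    return magnitude, direction

# Usage: a vertical step edge between columns 2 and 3
step = np.zeros((5, 6))
step[:, 3:] = 100
magnitude, direction = sobel_gradients(step)
print(magnitude[0])  # strongest response at columns 2 and 3, zero elsewhere
```

Thresholding `magnitude` gives a crude edge map; Canny refines this same gradient with non-maximum suppression and hysteresis.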

2. Texture Analysis

Texture features aim to characterize the repetitive or quasi-repetitive patterns in an image. They are particularly useful in applications like material classification, medical diagnostics (e.g., detecting abnormalities in tissue textures), and face recognition.

- Gray Level Co-occurrence Matrix (GLCM): Counts how often pairs of intensity values occur at a specified spatial offset (distance and angle). Statistical measures derived from the normalized GLCM (e.g., contrast, correlation, energy, homogeneity) serve as texture descriptors.

- Local Binary Patterns (LBP): Compares each pixel with its neighbors in a local region and encodes, as a binary string, whether each neighbor is at least as bright as the center. LBP is invariant to monotonic gray-level changes and computationally efficient, making it popular for texture classification. The basic operator is not rotation-invariant, but rotation-invariant variants map all circular bit-shifts of a pattern to the same code.

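```python
import cv2
# graycomatrix/graycoprops: scikit-image >= 0.19 (older releases spell them "grey...")
from skimage.feature import graycomatrix, graycoprops

# Load a grayscale image (uint8, so intensities fall in 0-255)
image = cv2.imread('image.jpg', cv2.IMREAD_GRAYSCALE)

# Compute the GLCM for horizontally adjacent pixels (distance 1, angle 0)
glcm = graycomatrix(image, distances=[1], angles=[0], levels=256,
                    symmetric=True, normed=True)
contrast = graycoprops(glcm, 'contrast')[0, 0]
print("Contrast:", contrast)
```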

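```matlab
% Compute GLCM in MATLAB
I = imread('image.jpg');
Igray = rgb2gray(I);
offsets = [0 1];   % Horizontal neighbor (row offset 0, column offset 1)
% Note: graycomatrix quantizes to 8 gray levels by default, so this contrast
% is not directly comparable to the 256-level Python result
glcm = graycomatrix(Igray, 'Offset', offsets, 'Symmetric', true);
stats = graycoprops(glcm, 'Contrast');
contrast = stats.Contrast;
disp(['Contrast: ', num2str(contrast)]);
```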
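Computing a GLCM by hand for a tiny image makes the definition concrete. The following is a minimal NumPy sketch, assuming an integer image whose values are already quantized to `levels` gray levels; `glcm` and `glcm_contrast` are hypothetical names. For the same offset and settings, the contrast should agree with scikit-image's `graycoprops(..., 'contrast')`.

```python
import numpy as np

def glcm(image, dx=1, dy=0, levels=8, symmetric=True):
    """Normalized gray-level co-occurrence matrix for pixel offset (dy, dx).

    Assumption: image holds integers in [0, levels).
    """
    image = np.asarray(image)
    h, w = image.shape
    m = np.zeros((levels, levels), dtype=float)
    # Visit every pixel whose offset neighbor is still inside the image
    for y in range(max(0, -dy), min(h, h - dy)):
        for x in range(max(0, -dx), min(w, w - dx)):
            m[image[y, x], image[y + dy, x + dx]] += 1
    if symmetric:
        m += m.T  # count each pair in both directions
    return m / m.sum()

def glcm_contrast(p):
    """Contrast = sum over (i, j) of p(i, j) * (i - j)^2."""
    i, j = np.indices(p.shape)
    return float(np.sum(p * (i - j) ** 2))

# Usage: a 4x4 image with 4 gray levels, horizontal neighbors
img = np.array([[0, 0, 1, 1],
                [0, 0, 1, 1],
                [0, 2, 2, 2],
                [2, 2, 3, 3]])
print(glcm_contrast(glcm(img, levels=4)))  # about 0.583 (= 7/12)
```

Pairs with large gray-level differences dominate the contrast sum, so smooth regions score near zero and busy textures score high.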

3. Shape Descriptors

Shape-based features describe the geometric properties of objects in an image. They often rely on boundary or contour extraction, enabling tasks such as object classification by shape, pose estimation, or defect detection.

- Contours: A sequence of points forming the boundary of an object. Contours are typically extracted from a binarized or segmented image.

- Hu Moments: Seven moment invariants (originally introduced by Hu) that remain approximately constant under translation, rotation, and scaling. They are useful for shape recognition when objects have consistent contours but may appear at different scales or orientations.

```python
import cv2

# Load a grayscale image and binarize it (Otsu's method picks the threshold);
# findContours treats every nonzero pixel as foreground
image = cv2.imread('image.jpg', cv2.IMREAD_GRAYSCALE)
_, binary = cv2.threshold(image, 0, 255, cv2.THRESH_BINARY + cv2.THRESH_OTSU)

# Find external contours (OpenCV 4.x returns two values)
contours, hierarchy = cv2.findContours(binary, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

# Draw contours in green on a color copy
color_image = cv2.cvtColor(image, cv2.COLOR_GRAY2BGR)
cv2.drawContours(color_image, contours, -1, (0, 255, 0), 2)
cv2.imwrite('contours.jpg', color_image)

# Compute Hu Moments for the largest contour (example)
if contours:
    largest = max(contours, key=cv2.contourArea)
    moments = cv2.moments(largest)
    huMoments = cv2.HuMoments(moments)
    print("Hu Moments for largest contour:", huMoments.flatten())
```

```matlab
% Find contours and compute shape descriptors in MATLAB
I = imread('image.jpg');
if size(I,3) == 3
    Igray = rgb2gray(I);
else
    Igray = I;
    I = repmat(I, [1 1 3]);   % RGB copy so boundaries can be drawn in color
end
BW = imbinarize(Igray);
BW = imfill(BW, 'holes');

% Extract object boundaries and draw them in green
boundaries = bwboundaries(BW, 'noholes');
Ioverlay = I;
for k = 1:length(boundaries)
    boundary = boundaries{k};
    for n = 1:size(boundary, 1)
        Ioverlay(boundary(n,1), boundary(n,2), :) = [0, 255, 0];
    end
end
imwrite(Ioverlay, 'contours.jpg');

% Region-based shape descriptors, computed once for all objects.
% regionprops has no 'Moments' property and no built-in Hu moments;
% those must be computed separately from each region's pixels.
stats = regionprops(BW, 'Area', 'Perimeter', 'Eccentricity', 'Solidity');
```
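`cv2.HuMoments` hides the arithmetic behind the invariants. A minimal NumPy sketch computing the first two of Hu's invariants from a binary mask shows where translation and scale invariance come from (centroid subtraction and area normalization). The names `normalized_central_moment` and `hu_first_two` are hypothetical, and working on a pixel mask rather than a contour polygon is an assumption, so values will not exactly match `cv2.moments` on a contour.

```python
import numpy as np

def normalized_central_moment(mask, p, q):
    """eta_pq of a binary mask: mu_pq / mu_00^(1 + (p + q) / 2).

    Assumption: every True pixel has unit mass.
    """
    ys, xs = np.nonzero(mask)
    xs = xs.astype(float)
    ys = ys.astype(float)
    m00 = len(xs)                  # area in pixels
    xc, yc = xs.mean(), ys.mean()  # centroid -> translation invariance
    mu_pq = np.sum((xs - xc) ** p * (ys - yc) ** q)
    return mu_pq / m00 ** (1 + (p + q) / 2)  # area normalization -> scale invariance

def hu_first_two(mask):
    """Hu's first two invariants: phi1 = eta20 + eta02, phi2 = (eta20 - eta02)^2 + 4 eta11^2."""
    eta20 = normalized_central_moment(mask, 2, 0)
    eta02 = normalized_central_moment(mask, 0, 2)
    eta11 = normalized_central_moment(mask, 1, 1)
    return eta20 + eta02, (eta20 - eta02) ** 2 + 4 * eta11 ** 2

# Usage: an L-shaped object, then the same object shifted and rotated by 90 degrees
mask = np.zeros((20, 20), dtype=bool)
mask[2:12, 3:6] = True
mask[9:12, 3:10] = True
print(hu_first_two(mask))
print(hu_first_two(np.roll(mask, (5, 4), axis=(0, 1))))  # same values
print(hu_first_two(np.rot90(mask)))                       # same values
```

A 90-degree rotation swaps eta20 and eta02 and flips the sign of eta11, which is exactly why phi1 and phi2 do not change; arbitrary angles are only approximately invariant on a pixel grid.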

4. Keypoint Detection and Matching

Keypoints (a.k.a. interest points or salient points) are well-defined, highly distinctive points in an image. They form the basis for many image alignment, stitching, and recognition algorithms, as each keypoint can be associated with a local descriptor that is invariant (or robust) to transformations such as rotation or scaling.

- SIFT (Scale-Invariant Feature Transform): A pioneering algorithm that detects keypoints at multiple scales and generates robust descriptors from histograms of local gradient orientations.

- SURF (Speeded-Up Robust Features): A faster alternative inspired by SIFT that uses integral images and box-filter approximations of second-order Gaussian derivatives.

- ORB (Oriented FAST and Rotated BRIEF): A free-to-use algorithm that combines the FAST keypoint detector with the binary BRIEF descriptor, adding an orientation estimate so that descriptors handle in-plane rotation. When ORB was introduced, SIFT and SURF were patent-encumbered; the SIFT patent has since expired (2020) and SIFT is now in OpenCV's main modules, while SURF remains in the non-free contrib module.
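SURF's speed comes largely from integral images: once the summed-area table is built, the sum over any axis-aligned box costs four lookups regardless of the box size, so large box filters are as cheap as small ones. A minimal NumPy sketch of just that trick (not of SURF's Hessian-based detector), with hypothetical helper names `integral_image` and `box_sum`:

```python
import numpy as np

def integral_image(image):
    """Summed-area table with a leading zero row/column: ii[y, x] = image[:y, :x].sum().

    Assumption: integer image; int64 accumulation avoids overflow for uint8 input.
    """
    ii = np.zeros((image.shape[0] + 1, image.shape[1] + 1), dtype=np.int64)
    ii[1:, 1:] = np.cumsum(np.cumsum(image, axis=0), axis=1)
    return ii

def box_sum(ii, y0, x0, y1, x1):
    """Sum of image[y0:y1, x0:x1] from four table lookups."""
    return ii[y1, x1] - ii[y0, x1] - ii[y1, x0] + ii[y0, x0]

# Usage
rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(8, 10))
ii = integral_image(img)
print(box_sum(ii, 2, 3, 7, 9) == img[2:7, 3:9].sum())  # True
```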

```python
import cv2

# ORB keypoint detection
image = cv2.imread('image.jpg', cv2.IMREAD_GRAYSCALE)
orb = cv2.ORB_create()
keypoints, descriptors = orb.detectAndCompute(image, None)
output = cv2.drawKeypoints(image, keypoints, None, color=(0, 255, 0))
cv2.imwrite('keypoints.jpg', output)
```

```matlab
% ORB keypoint detection in MATLAB
I = imread('image.jpg');
Igray = rgb2gray(I);
points = detectORBFeatures(Igray);
[features, validPoints] = extractFeatures(Igray, points);

% Create an output image showing keypoints
output = insertMarker(I, validPoints.Location, 'circle', 'Color', 'red');
imwrite(output, 'keypoints.jpg');
```
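The section heading mentions matching, but the snippets stop at detection. ORB produces binary descriptors (in OpenCV, one row of 32 `uint8` bytes per keypoint), which are compared by Hamming distance. Below is a minimal NumPy sketch of brute-force matching with a mutual-nearest-neighbor ("cross-check") filter, assuming descriptor arrays shaped like OpenCV's ORB output; `hamming_distances` and `match_cross_check` are hypothetical names. In OpenCV itself, `cv2.BFMatcher(cv2.NORM_HAMMING, crossCheck=True)` provides this kind of matching.

```python
import numpy as np

def hamming_distances(desc_a, desc_b):
    """Pairwise Hamming distances between packed binary descriptors (uint8 rows)."""
    bits_a = np.unpackbits(desc_a, axis=1)  # (Na, 8 * nbytes) array of 0/1
    bits_b = np.unpackbits(desc_b, axis=1)  # (Nb, 8 * nbytes)
    # Count positions where the bits differ; memory grows as Na * Nb * bits
    return (bits_a[:, None, :] != bits_b[None, :, :]).sum(axis=2)

def match_cross_check(desc_a, desc_b):
    """Return (i, j, distance) where j is i's nearest neighbor in B and vice versa."""
    d = hamming_distances(desc_a, desc_b)
    best_b = d.argmin(axis=1)  # nearest B descriptor for each A descriptor
    best_a = d.argmin(axis=0)  # nearest A descriptor for each B descriptor
    return [(i, int(j), int(d[i, j])) for i, j in enumerate(best_b) if best_a[j] == i]

# Usage: B holds A's descriptors in a different order, one with 3 bits flipped
# (assumption: random bytes stand in for real ORB descriptors)
rng = np.random.default_rng(1)
a = rng.integers(0, 256, size=(5, 32)).astype(np.uint8)
b = a[[3, 0, 4, 1, 2]].copy()
b[0, 0] ^= 0b00000111
print(sorted(match_cross_check(a, b)))
```

The cross-check discards one-sided matches, a cheap way to reject many ambiguous correspondences before geometric verification.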

5. Color Descriptors

Color-based features quantify the distribution and relationships of colors within an image. They play an essential role in applications like content-based image retrieval (CBIR), scene understanding, and object detection in color-based contexts.

- Color Histograms: Count how often each color (or intensity) value occurs. This global descriptor is cheap to compute and invariant to in-plane rotation and, once normalized by the pixel count, to image scale; however, it discards spatial layout and is sensitive to large illumination changes.

- Color Moments: Low-order statistics of each channel's distribution (typically mean, standard deviation, and skewness) that provide a compact representation of the color distribution. They are particularly useful when a histogram-based approach would be too coarse or too high-dimensional.

```python
import cv2

image = cv2.imread('image.jpg')  # loaded in BGR channel order by default

# Compute a 256-bin grayscale histogram (for demonstration)
gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
hist = cv2.calcHist([gray], [0], None, [256], [0, 256])

# Normalize so the bins sum to 1 (same convention as the MATLAB version)
hist = hist / hist.sum()
print("Normalized Grayscale Histogram:", hist.flatten())
```

```matlab
% Compute grayscale histogram in MATLAB
I = imread('image.jpg');
if size(I,3) == 3
    I = rgb2gray(I);
end
counts = imhist(I, 256);
counts = counts / sum(counts);   % Normalize the histogram
disp('Normalized Grayscale Histogram:');
disp(counts');
```
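The snippets above only compute a grayscale histogram; color moments are not shown. A minimal NumPy sketch, assuming an H × W × C array (channel order is whatever the loader returns, e.g. BGR for `cv2.imread`). Skewness here is the signed cube root of the third central moment, one common convention for color moments; the standardized skewness mu3 / sigma^3 is an alternative. The name `color_moments` is hypothetical.

```python
import numpy as np

def color_moments(image):
    """Per-channel [means..., standard deviations..., skewness...] -> vector of length 3*C.

    Assumption: skewness = cube root of the third central moment.
    """
    pixels = image.reshape(-1, image.shape[2]).astype(float)  # one row per pixel
    mean = pixels.mean(axis=0)
    std = pixels.std(axis=0)
    third = ((pixels - mean) ** 3).mean(axis=0)
    skew = np.cbrt(third)  # keeps the sign, same units as the intensities
    return np.concatenate([mean, std, skew])

# Usage: a 2x2 three-channel image
img = np.zeros((2, 2, 3))
img[..., 0] = [[0, 0], [0, 8]]  # one bright pixel -> positive skew
img[..., 1] = 5                 # constant channel
img[..., 2] = [[1, 3], [1, 3]]  # symmetric spread -> zero skew
print(color_moments(img))       # 9 numbers describe the whole image
```

Nine numbers per image (for three channels) is far smaller than a 256-bin-per-channel histogram, which is the trade-off the bullet above describes.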

Applications of Feature Extraction

Feature extraction underpins a wide variety of real-world image processing and computer vision applications:

-

Object Recognition:

Classifying or identifying specific objects in images (e.g., faces, vehicles, landmarks) by matching extracted features to known models or reference data. -

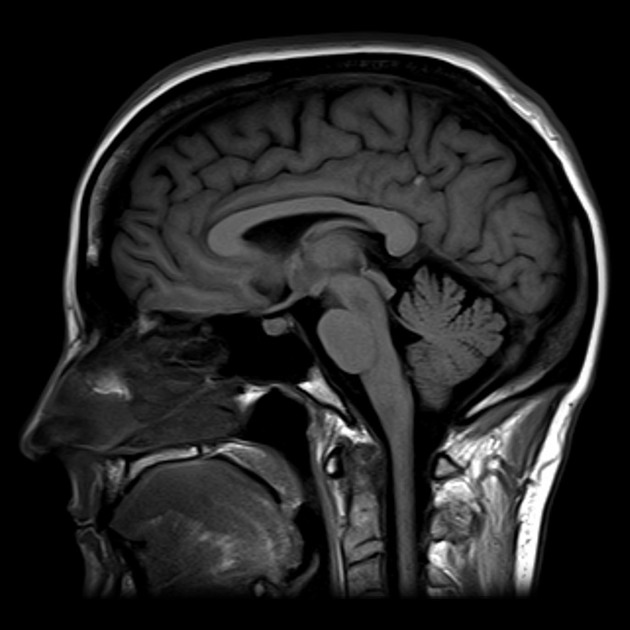

Medical Imaging:

Detecting subtle patterns or abnormalities (tumors, lesions, microcalcifications) through texture features or shape descriptors in radiological scans. -

Face Recognition:

Facial landmark detection (e.g., eyes, nose, mouth) and descriptor computation to enable person identification in security or social media applications. -

Image Retrieval:

Searching large databases by comparing extracted features (color histograms, keypoints) with a query image, a technique known as Content-Based Image Retrieval (CBIR).
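Histogram-based CBIR reduces to computing one descriptor per image and ranking the database by similarity to the query. A minimal sketch, assuming small in-memory H × W × 3 `uint8` arrays, a joint histogram with 8 bins per channel, and histogram intersection as the similarity (one of several possible measures; OpenCV's `cv2.compareHist` offers it as `cv2.HISTCMP_INTERSECT`). All function names are hypothetical.

```python
import numpy as np

def color_histogram(image, bins=8):
    """Joint 3-D color histogram, flattened and normalized to sum to 1.

    Assumption: H x W x 3 uint8 image, `bins` bins per channel.
    """
    pixels = image.reshape(-1, 3)
    hist, _ = np.histogramdd(pixels, bins=(bins, bins, bins), range=((0, 256),) * 3)
    hist = hist.ravel()
    return hist / hist.sum()

def intersection(h1, h2):
    """Histogram intersection: 1.0 for identical normalized histograms, 0.0 for disjoint ones."""
    return float(np.minimum(h1, h2).sum())

def rank_database(query, database):
    """Database indices sorted from most to least similar to the query."""
    hq = color_histogram(query)
    scores = [intersection(hq, color_histogram(img)) for img in database]
    return sorted(range(len(database)), key=lambda i: scores[i], reverse=True)

# Usage: a red query against a blue, a mostly red, and a red image
red = np.zeros((4, 4, 3), dtype=np.uint8)
red[..., 0] = 250
blue = np.zeros((4, 4, 3), dtype=np.uint8)
blue[..., 2] = 250
mostly_red = red.copy()
mostly_red[0, :] = blue[0, :]
print(rank_database(red, [blue, mostly_red, red]))  # [2, 1, 0]
```

In a real system the database histograms would be computed once and stored, so only the query needs processing at search time.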

Challenges and Considerations

Although feature extraction can greatly simplify complex image data, several hurdles remain:

- Invariance Issues: Features should be robust to variations in scale, rotation, viewpoint, and illumination. Designing or choosing features that remain consistent under all relevant transformations is challenging.

- Computational Efficiency: Some feature extraction algorithms (e.g., SIFT) can be computationally expensive for large datasets or real-time applications. More efficient alternatives like ORB, or hardware acceleration, may be necessary.

- High-Dimensional Data: Complex descriptors (especially those built from multiple channels or large patches) can grow rapidly in dimensionality, requiring dimensionality reduction or careful regularization.

- Task-Dependent Suitability: The “best” feature descriptor can vary significantly depending on the image domain (natural scenes vs. medical images), data characteristics (color vs. grayscale), and final application (e.g., retrieval vs. classification).
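As one example of the dimensionality reduction mentioned under High-Dimensional Data, principal component analysis (PCA) projects descriptors onto the few directions that carry most of their variance. A minimal NumPy sketch via the SVD, assuming descriptors are stacked one per row; `pca_fit` and `pca_transform` are hypothetical names, and PCA is only one of several possible reduction techniques.

```python
import numpy as np

def pca_fit(features, k):
    """Learn a k-dimensional PCA projection from an (n_samples, n_features) matrix."""
    mean = features.mean(axis=0)
    centered = features - mean
    # Rows of vt are orthonormal principal directions, sorted by decreasing singular value
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)  # fraction of variance per direction
    return mean, vt[:k], explained[:k]

def pca_transform(features, mean, components):
    """Project descriptors onto the learned principal directions."""
    return (features - mean) @ components.T

# Usage (assumption: synthetic 64-D "descriptors" driven by 3 hidden factors plus noise)
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 3))
X = latent @ rng.normal(size=(3, 64)) + 0.01 * rng.normal(size=(200, 64))
mean, comps, explained = pca_fit(X, k=3)
Z = pca_transform(X, mean, comps)
print(Z.shape, explained.sum())  # (200, 3) and nearly all of the variance
```

The projection must be learned on training descriptors and then applied unchanged to new ones, so that reduced vectors stay comparable.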

Further Learning Resources

For a deeper exploration of feature extraction methods and best practices, consult the following references:

- OpenCV (computer vision library): Offers a comprehensive set of feature detectors and descriptors, including SIFT and ORB (SURF is available in the non-free contrib module).

- scikit-image (image processing in Python): Includes GLCM, LBP, and other classical methods in a Pythonic API.

- Feature Extraction (Wikipedia): High-level overview and links to related research articles.

- Kaggle image processing datasets: Practice real-world feature extraction and classification tasks on open datasets.

- Digital Image Processing by Gonzalez & Woods: A classic textbook that covers foundational feature extraction theories and algorithms.

Interactive Demos

Local Binary Patterns (LBP) is a simple yet efficient texture descriptor. For each pixel (x, y), it collects the intensities of the neighbors in a circular or square neighborhood (here, the 3×3 block for radius 1) and compares each neighbor with the center pixel: a neighbor whose intensity is greater than or equal to the center's contributes a 1 bit, otherwise a 0. The eight bits form an 8-bit code (0 to 255), which the demo maps to a grayscale value to display the LBP pattern; histograms of these codes are commonly used for texture analysis.
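The per-pixel procedure above maps directly onto array slicing. A minimal NumPy sketch, assuming a 2-D grayscale array, radius 1, and a clockwise neighbor order starting at the top-left (the ordering and the choice to skip border pixels are assumptions; scikit-image's `local_binary_pattern` uses its own ordering, so numeric codes can differ). The name `lbp_3x3` is hypothetical.

```python
import numpy as np

# Assumption: neighbors visited clockwise from the top-left; the first one ends up as bit 7.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def lbp_3x3(image):
    """Basic 8-neighbor LBP code (0-255) for every interior pixel of a 2-D array."""
    img = np.asarray(image, dtype=np.int32)
    h, w = img.shape
    center = img[1:h - 1, 1:w - 1]
    codes = np.zeros_like(center)
    for dy, dx in OFFSETS:
        neighbor = img[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        # Shift existing bits left, then append 1 if neighbor >= center, else 0
        codes = (codes << 1) | (neighbor >= center)
    return codes.astype(np.uint8)

# Usage: one 3x3 patch with center value 6
patch = np.array([[6, 5, 2],
                  [7, 6, 1],
                  [9, 8, 7]])
print(lbp_3x3(patch))  # [[143]], i.e. bits 10001111

# A texture descriptor is then the histogram of codes over a region
codes = lbp_3x3(np.random.default_rng(0).integers(0, 256, size=(32, 32)))
descriptor = np.bincount(codes.ravel(), minlength=256)
```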

A color histogram is one of the simplest global descriptors: it counts how often each intensity value occurs in each color channel. The demo computes one histogram per channel (red, green, blue) and displays each as a simple bar chart.

It reads the pixel values of the original image and, for each channel, increments the bin corresponding to each pixel's intensity. It then normalizes the counts and draws the bar chart for each channel in a different color.
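The counting and normalization steps can be sketched in a few lines of NumPy, assuming an H × W × 3 `uint8` array (note that an image read with `cv2.imread` is in BGR order, so row 0 would then be blue). Drawing the bars, e.g. with matplotlib, is left out. The name `channel_histograms` is hypothetical.

```python
import numpy as np

def channel_histograms(image, bins=256):
    """Normalized histogram per channel; row c corresponds to channel c of the input.

    Assumption: H x W x 3 uint8 image; bins divides the 0-255 range evenly.
    """
    hists = np.zeros((image.shape[2], bins))
    for c in range(image.shape[2]):
        values = image[..., c].ravel().astype(np.int64)  # widen before scaling
        # One increment per pixel; with 256 bins the bin index is the intensity itself
        counts = np.bincount(values * bins // 256, minlength=bins)
        hists[c] = counts / counts.sum()
    return hists

# Usage
img = np.zeros((2, 2, 3), dtype=np.uint8)
img[..., 0] = [[255, 255], [0, 0]]
img[..., 1] = 128
h = channel_histograms(img)
print(h[0, 0], h[0, 255], h[1, 128])  # 0.5 0.5 1.0
```

Fewer bins (for example `bins=32`) make the descriptor more compact and less sensitive to small intensity shifts, at the cost of resolution.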